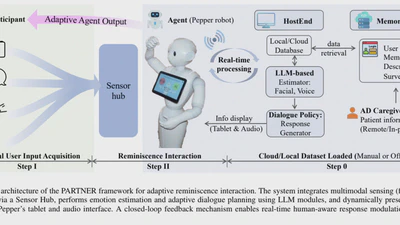

Causal Reinforcement Learning based Agent-Patient Interaction with Clinical Domain Knowledge

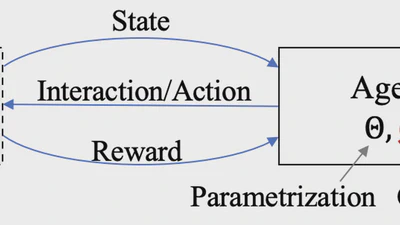

We present a novel framework, Causal structure-aware Reinforcement Learning (CRL), that explicitly integrates causal discovery and reasoning into policy optimization.